I recently made some changes to the website. I decided to write about them to help others do something similar on their personal static websites.

Search bar

I added a search bar to the home page of victorzhou.dev. This site is indexed by Search My Site. Search My Site indexes my website for me, and provides an API for me to implement a search bar on my own domain. The code for the search bar is just in a <script> tag, and can be found here. Search My Site has a very helpful blog post which I used to start writing this.

Filtering out category pages

I did also have to update some of the paths on my site to make the search more useful. The search was returning some of the category pages on my site (such as /transit/index.html), so a search for any word in an article title would return up to 2 extra links: a link to the category page, and a link to the home page, if the article was linked to on the home page.

I fixed this by taking advantage of how Apache serves index.html if no file name is specified. I've updated links to category pages and the home page to not link to a index.html URL. For example, the footer for this article might have previously looked like this

<footer> <a href="./index.html">Programming notes</a> <a href="../index.html">Home</a> </footer>

Now, it looks like this:

<footer> <a href="./">Programming notes</a> <a href="../">Home</a> </footer>

This makes it so that Search My Site indexes the category pages without an .html URL. The article pages are still indexed with an .html URL. This means that the search can just filter for html URLs. This is done by just filtering out non-HTML urls in the frontend (code). However, writing this article, I discovered that Search My Site will allows developers to filter URLs too, so I will update the search query to take advantage of this immediately. As in, it will already be live, and here is the commit.

Testing in production

It was hard for me to test the search functionality locally, as Search My Site only allows the domain being searched to access the API in a fetch request. The code seems to try to enable localhost testing, but it didn't work for me. Maybe I'm misinterpreting what line 105 is trying to accomplish. As a result, while testing locally, all I could do was click the button and see what the blocked GET request looked like. This wasn't effective at ensuring that search results were rendered correctly. As a result, I had to do deploy the website and test on victorzhou.dev.

Setting up Gcore CDN

I wanted to set up a CDN for the images on this site. Previously, images were served directly from S3 with an S3 url, but it felt cleaner to serve assets from a victorzhou.dev URL. There are also performance advantages with a CDN, but these were just a bonus for me, as I don't think there is particularly high load to this website.

I didn't want to use an Amazon CDN or Cloudflare for personal reasons. I just searched for free CDNs and I ended up on Gcore. I liked that they were based in the EU, and were therefore subject to probably-better privacy laws than other jurisdictions.

The setup turned out to be really straight-forward. This is the guide I used. You just set up a CDN resource in Gcore, point it to your S3 bucket, and set up a CNAME record. It even sets up https on the CDN subdomain you configure automatically.

It did take some time for the CNAME records to propogate so that the Let's Encrypt certificate could be created. The UI does warn you about this, but I saw a number of different error messages during the process, so I got confused and restarted the certificate generation in the UI a couple of times. It was pretty low effort to click "cancel" then "generate" though, so no big deal. I could have avoided this "problem" by just waiting for the DNS to propogate properly.

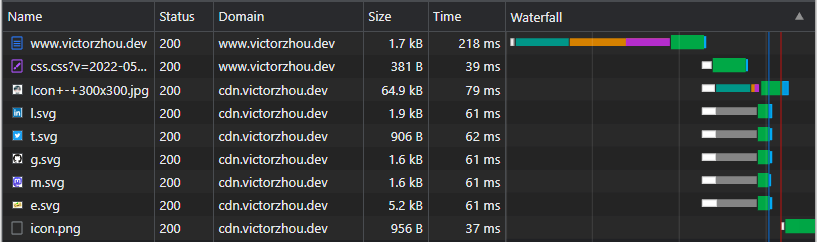

Here's what the pageload waterfall for my homepage looks like with Gcore CDN serving the image assets. It looks fine to me. I did probably warm up the CDN by trying to get this waterfall screenshot. For reference, I am located in Seattle, USA with fiber internet.